Today marks the announcement of Muse Spark, the inaugural model in a new series of large language models developed by Meta Superintelligence Labs. This initiative aims to create a personal superintelligence assistant that helps individuals with their most important tasks, wherever they are.

A New Model: Muse Spark

Over the past nine months, Meta Superintelligence Labs has completely overhauled its AI infrastructure and made rapid progress in development. Muse Spark is the first model in the new Muse series, which adopts a scientific approach to scaling models: each generation validates and builds on the previous one before scaling further. By design, this initial model is compact and efficient, yet capable of tackling complex questions in areas like science, math, and health. It serves as a robust foundation, with the next generation already in development. Muse Spark currently powers the Meta AI assistant in the Meta AI app and on meta.ai, supporting complex reasoning and multimodal tasks.

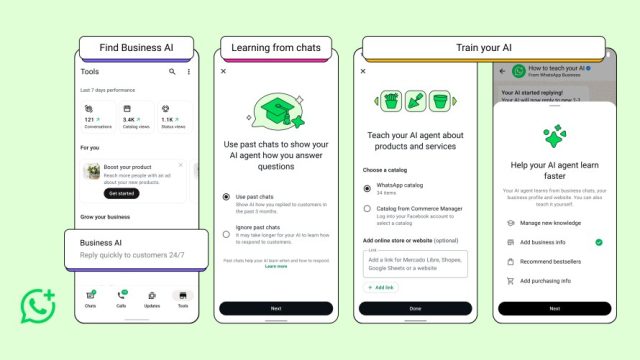

What’s Changed With Meta AI

The Meta AI app and meta.ai are receiving upgrades today, accompanied by a new appearance. Whether users need quick answers or help with intricate problems that demand strong reasoning, Meta AI now accommodates both. Users can switch between modes based on their tasks, and Meta AI can deploy multiple subagents simultaneously to address a single request. For instance, when planning a family trip to Florida, one agent can draft the itinerary, another can compare Orlando and the Keys, and a third can find kid-friendly activities. Because all three work at once, results arrive faster and cover more ground.
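The idea of running several subagents at once on a single request can be illustrated with a minimal Python sketch. This is not Meta AI's implementation, which the announcement does not describe. The function `run_subagent`, the role names, and the simulated delay are all hypothetical stand-ins for real model calls.

```python
import asyncio


async def run_subagent(role: str, task: str) -> str:
    """Hypothetical subagent: a real system would call a model here."""
    await asyncio.sleep(0.1)  # simulated latency, an assumption for illustration
    return f"[{role}] {task}"


async def plan_trip() -> dict[str, str]:
    # The three subtasks from the Florida example, one per subagent.
    subtasks = {
        "itinerary": "Draft a family itinerary for Florida",
        "comparison": "Compare Orlando and the Keys",
        "activities": "Find kid-friendly activities",
    }
    # gather() runs the coroutines concurrently and preserves input order.
    results = await asyncio.gather(
        *(run_subagent(role, task) for role, task in subtasks.items())
    )
    return dict(zip(subtasks, results))


if __name__ == "__main__":
    for role, answer in asyncio.run(plan_trip()).items():
        print(f"{role}: {answer}")
```

Because the subagents run concurrently, the total wait in this sketch is roughly that of the slowest subagent rather than the sum of all three.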

Ask Meta AI: It Understands

The world moves quickly, and much of it cannot be captured in text alone. Muse Spark therefore incorporates strong multimodal perception, enabling Meta AI to see and comprehend visual inputs, not just text. For example, users can snap a photo of an airport snack shelf, and Meta AI will identify the snacks and rank them by protein content, eliminating the need to squint at labels. Users can also scan a product and ask how it compares with alternatives. This capability is what separates an AI that needs everything explained to it from one that can observe the world alongside users. When Muse Spark powers AI glasses, the assistant will better perceive and understand the user’s surroundings.

Multimodal perception proves especially beneficial for health-related inquiries. Muse Spark enhances Meta AI’s ability to navigate health questions, offering more detailed responses, including for questions that involve images and charts. Because health is a primary reason individuals turn to AI, the model was developed in collaboration with physicians to improve its capacity to provide useful information on common health concerns.

Muse Spark excels in visual coding, enabling users to create custom websites and mini-games from prompts. Users can ask Meta AI to build a dashboard for planning an event, develop a retro arcade game with high scores, or launch a whimsical flight simulator, and then share these creations with friends.

Ask Meta AI: It’s Plugged Into What You Care About

Meta AI can now help users discover fashion choices, room styling, or gift ideas. Shopping mode draws on styling inspiration and brand storytelling from within Meta’s apps, surfacing ideas from creators and communities that users already follow.

When users research places to visit or trending topics, Meta AI provides rich, relevant context alongside the conversation. Users can explore locations and view public posts from locals familiar with the area. Asking about the current buzz returns a fuller picture drawn from content and community posts, presented in the context of the user’s network.

Looking Ahead

The Meta AI app and meta.ai will feature the upgraded experience with Instant and Thinking modes wherever they are currently available. These new Meta AI features are beginning to roll out in the US, with plans to extend to more countries and to platforms such as Instagram, Facebook, Messenger, WhatsApp, and AI glasses in the coming weeks. On AI glasses, perception capabilities will be even more potent. Select partners will also get access to the underlying technology through a private API preview, and future versions may be open-sourced.

This is just the beginning. As features expand, expect more visual results, with Reels, photos, and posts integrated into answers and credited to their creators. As models advance, safety and privacy safeguards will continue to be developed, starting with an enhanced risk framework and other protections introduced today.

The future of Meta AI is centered on the relationships and context integral to users’ lives. The goal is to build toward personal superintelligence: an AI that not only answers questions but truly understands the user’s world because it is built upon it.